What Is HappyHorse AI? Early Facts, Limits, and Prompt Opportunities

HappyHorse-1.0 has become one of the most discussed names in AI video after it appeared suddenly in early rankings and quickly triggered comparison posts across the industry. Public access and official documentation are still limited, however, so most creators face an execution gap: strong curiosity, weak practical guidance.

What appears to be publicly known

The safest framing is that HappyHorse is an early-stage, heavily discussed video model with reported strength in text-to-video, image-to-video, and synchronized audio output. Some industry articles also describe it as unusually fast for its quality tier.

That does not mean every technical claim circulating online is confirmed. For SEO and product positioning, it is better to use hedged language such as "reported," "observed in benchmarks," or "discussed by industry media" than to treat every rumor as settled fact.

Why creators still struggle even when a model is hot

A breakout model creates demand before it creates documentation. Creators want examples, prompt patterns, and reusable structure immediately, but new models usually launch with sparse public prompt libraries and uneven community knowledge.

That means the real bottleneck is not hype. It is translation: turning a video style you admire into a prompt structure you can actually reuse.

Why reverse engineering is the practical workflow

When a model is not broadly accessible, reference videos become the most stable source of truth. You can inspect pacing, framing, subject motion, lighting, ambient sound, and shot transitions even when official prompt guidance is missing.

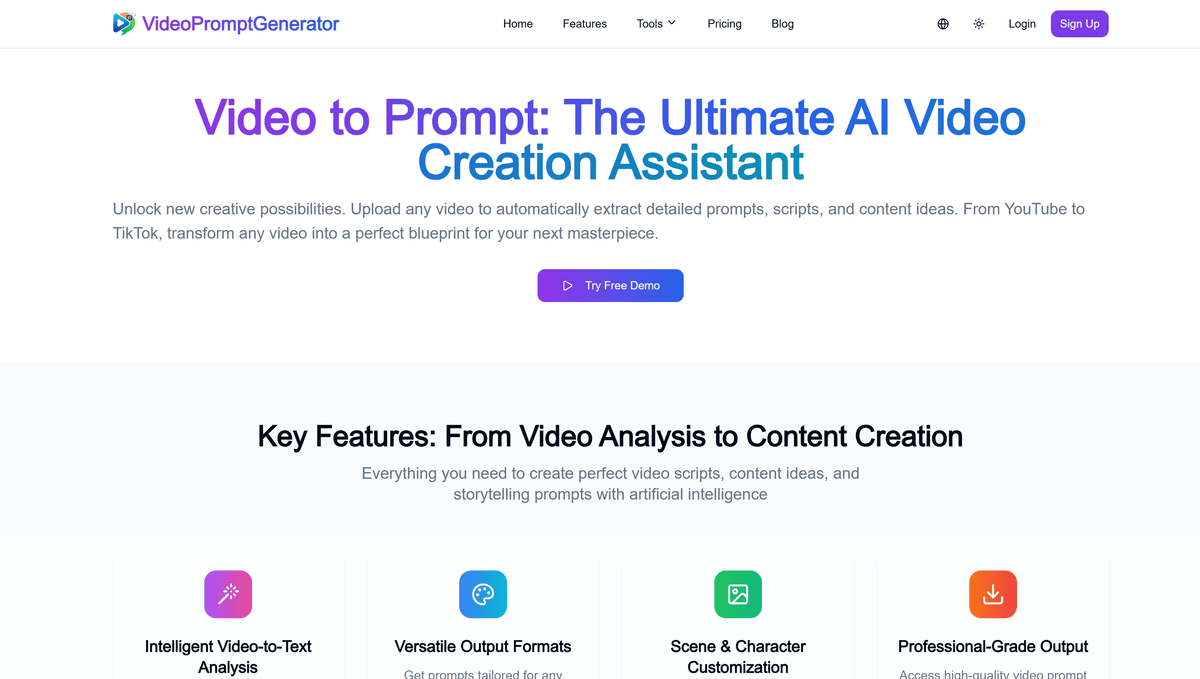

This is where a video-to-prompt workflow becomes useful. Instead of guessing abstract prompt language, you begin from a real clip and derive a prompt blueprint that is easier to test, adjust, and repurpose.

What to extract from a strong reference video

For most commercial or cinematic clips, the highest-value fields are subject, action, scene, lens feel, camera motion, rhythm, lighting, atmosphere, and audio cues. Those fields tend to carry over most reliably into higher-quality prompt experiments.

The goal is not to copy a finished video word for word. The goal is to capture the generative structure that produced the result you want.
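One way to make that structure reusable is to record the fields above in a small data structure and render them into a prompt string. The sketch below is only an illustration: the `PromptBlueprint` class, its field names, and the comma-joined output format are assumptions made for this example, not an official HappyHorse prompt format or API.

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass
class PromptBlueprint:
    """Hypothetical container for notes taken from one reference clip."""

    subject: str = ""
    action: str = ""
    scene: str = ""
    lens_feel: str = ""
    camera_motion: str = ""
    rhythm: str = ""
    lighting: str = ""
    atmosphere: str = ""
    audio_cues: str = ""

    def missing(self) -> list[str]:
        """Return the names of fields that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def to_prompt(self) -> str:
        """Join the filled-in fields, in declaration order, into one string."""
        parts = [getattr(self, f.name).strip() for f in fields(self)]
        return ", ".join(p for p in parts if p)


# Example values are invented for illustration only.
blueprint = PromptBlueprint(
    subject="a ceramic coffee cup",
    action="steam rising slowly",
    scene="wooden cafe table by a window",
    camera_motion="slow push-in",
    lighting="soft morning side light",
    audio_cues="quiet cafe ambience",
)

print(blueprint.to_prompt())
print(blueprint.missing())
```

Keeping each field separate makes it easy to change one variable at a time, for example swapping only the camera motion, and to see which parts of a reference clip have not been described yet.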

What to avoid in your own positioning

Do not imply that you have official HappyHorse access if you do not. Do not state unverified architecture details as facts. Do not promise compatibility that the product cannot deliver.

A more defensible position is simpler: help users derive better prompts from real videos while the ecosystem is still forming.